This blog post is a companion article to my latest talk, titled « Not a Kubernetes fan? The state of PaaS in 2024 », presented for the first time at DevNexus 2024.

This post was updated in February 2025 before giving the talk at ConFoo 2025 – here is a link to the slides.

A Platform As A Service is commonly known as a remote environment ready to deploy your apps (I invite you to read this definition by Cloudflare), taking care of:

- turning your source code into a deployed app,

- binding databases and/or message queues to your app,

- scaling and upgrading your app,

- forwarding ingress traffic to your app,

- etc.

We can see the first PaaS appear as far back as… the end of the 90s 😱 🧓, when uploading PHP files via FTP to your ISP and connecting to a shared MySQL database allowed you to deploy blogs, wikis, etc. Granted, it wasn’t really flexible at that time; but still, it was convenient and it kinda worked!

More seriously though, Heroku in the late 2000s was one of the first to integrate with the developer workflow: git push to deploy, a variety of choices for data persistence and a variety of choices for the programming language.

CloudFoundry soon after brought that experience to your own infrastructure, with cf push taking you from your source to a URL.

Since then came the rise of the (Docker) Container Image, Kubernetes, and the Cloud Providers (AWS, Azure, GCP, etc.).

Did they make things simpler for the developer? Well, being able to interact with an almost infinite amount of infrastructure sure was exciting, but once the first EC2 instance, Pod or even Lambda was created, developers found out that it takes more than infrastructure to make developing and deploying an application an efficient workflow.

Ingress Controllers, API Gateways, Services, Authorization and Authentication, wiring Databases and Message Queues to the apps, and… wiring other apps (microservices) to each other… So much complexity that developers, myself included, began missing that simple git push or cf push experience. Granted, developers very often love spending time figuring out and automating efficient ways to deploy to a given infrastructure; but is it the best use of the internal workforce?

What’s wrong with Kubernetes?

Nothing really: it’s a great API and Container Orchestration platform.

But turning Kubernetes into a PaaS (Platform As A Service, allowing the developers to focus on their code) is not as easy as it sounds.

When an application is deployed into a Pod, there’s some plumbing involved to get traffic into the app; that’s already some complexity that a PaaS should solve.

But then, once it’s deployed, it also needs to be maintained, with the proper pipelines in place to ensure a smooth rollout of new versions.

PaaS: the list

There are so many PaaS available in 2024; let me explain how I got to the list (you’re welcome to ask me in the comments to add any I missed!):

- Browsing HackerNews PaaS posts and grabbing links from those posts.

- Looking at what famous Developer Advocates used

- Investigating what major cloud providers offer(ed)

And then, I filtered them…

The non goals of this review

I won’t look at the costs in detail; that said, I found an interesting link in a Hacker News thread comparing all PaaS.

I won’t look into logging, monitoring or tracing capabilities either, at least not in detail (because PaaS usually provide some of those, at varying levels).

PaaS that were not tested

The frontend PaaS’es

Well, I had to make choices for the presentation to keep it « under control », so I decided to focus on backend PaaS (especially Java-compatible ones, but usually when they support Java, they support a bunch of other backend languages / frameworks) and I did not evaluate frontend PaaS. From my quick glance though, those are the main ones:

- Vercel – Angular, React, NextJS (with server side rendering)

- Cloudflare (with its Workers)

- Netlify – NextJS and others (with server side rendering)

Cloud Dev. environments

While they sometimes look like PaaS, cloud dev. environments such as Repl.it (this one is special though, because you can deploy too), idx.dev (frontend-only), codesandbox (frontend only) are a particular niche that I did not test out.

The specialized PaaS

CloudFoundry based PaaS

CloudFoundry has been around since 2010 or so, and is still rocking!

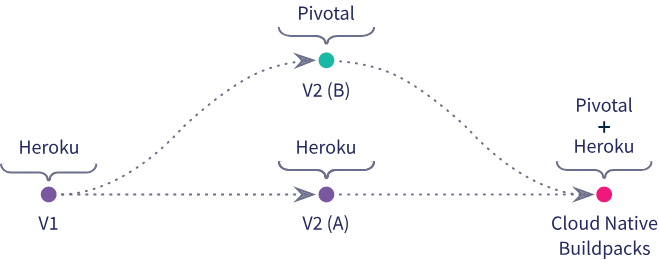

CloudFoundry can be installed on your (cloud) infrastructure via BOSH, and then developers can start using it, most probably with the Java Buildpack. That one is a v2 buildpack; Cloud Native Buildpacks (v3) were created more recently, as we’ll see later. For more info on the history, you can check out a previous presentation I gave about buildpacks.

There is some « magic » involved with the CloudFoundry Java Buildpack so that the developer never needs to specify the JDBC datasource URL, username or password. The magic in that case is the java-cfenv library, which is injected into the classpath at build time and resolves all those crucial properties at runtime.
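To give an idea of what that « magic » boils down to, here is a minimal sketch, not java-cfenv’s actual code. It assumes the bound service exposes a `uri` credential shaped like `postgres://user:password@host:port/db`; the class name, the method name and the sample credentials are made up for illustration.

```java
import java.net.URI;
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper, for illustration only: this is NOT the java-cfenv API.
class CfUriToJdbc {

    // Converts a CloudFoundry-style service URI into the three datasource properties.
    static Map<String, String> fromServiceUri(String serviceUri) {
        URI uri = URI.create(serviceUri);
        // userInfo looks like "user:password"; split on the first colon only
        String[] userInfo = uri.getUserInfo().split(":", 2);

        Map<String, String> settings = new LinkedHashMap<>();
        settings.put("url", "jdbc:postgresql://" + uri.getHost() + ":" + uri.getPort() + uri.getPath());
        settings.put("username", userInfo[0]);
        settings.put("password", userInfo[1]);
        return settings;
    }

    public static void main(String[] args) {
        // Made-up credentials, shaped like the "uri" entry of a bound Postgres service
        Map<String, String> settings =
                fromServiceUri("postgres://appuser:s3cret@db.internal:5432/meetups");
        System.out.println(settings.get("url"));
    }
}
```

The real library reads those credentials from the `VCAP_SERVICES` environment variable that CloudFoundry sets on your app, so none of this ends up in your own code.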

If you want to quickly evaluate it, you can try anynines, a CloudFoundry provider; when you’re ready to install it on your infra, you should definitely check out Tanzu Application Service (formerly known as Pivotal CloudFoundry).

Heroku

Heroku is the OG, there’s no other way to put it.

There was a big shock when they announced they were abandoning their free tier; after all, not only did they host probably millions of hobby projects, but very serious ones as well!

Their particularity is the use of « dynos », some sort of containers, for isolation, along with an ease of use that is still impressive.

They maintain and support their own CNB Buildpacks, the Heroku Buildpacks.

Fly.io

Fly.io is, I believe, the successor to Heroku.

Really developer focused, with a more limited UI and magic, but not expensive at all and supporting a wide range of languages and use cases.

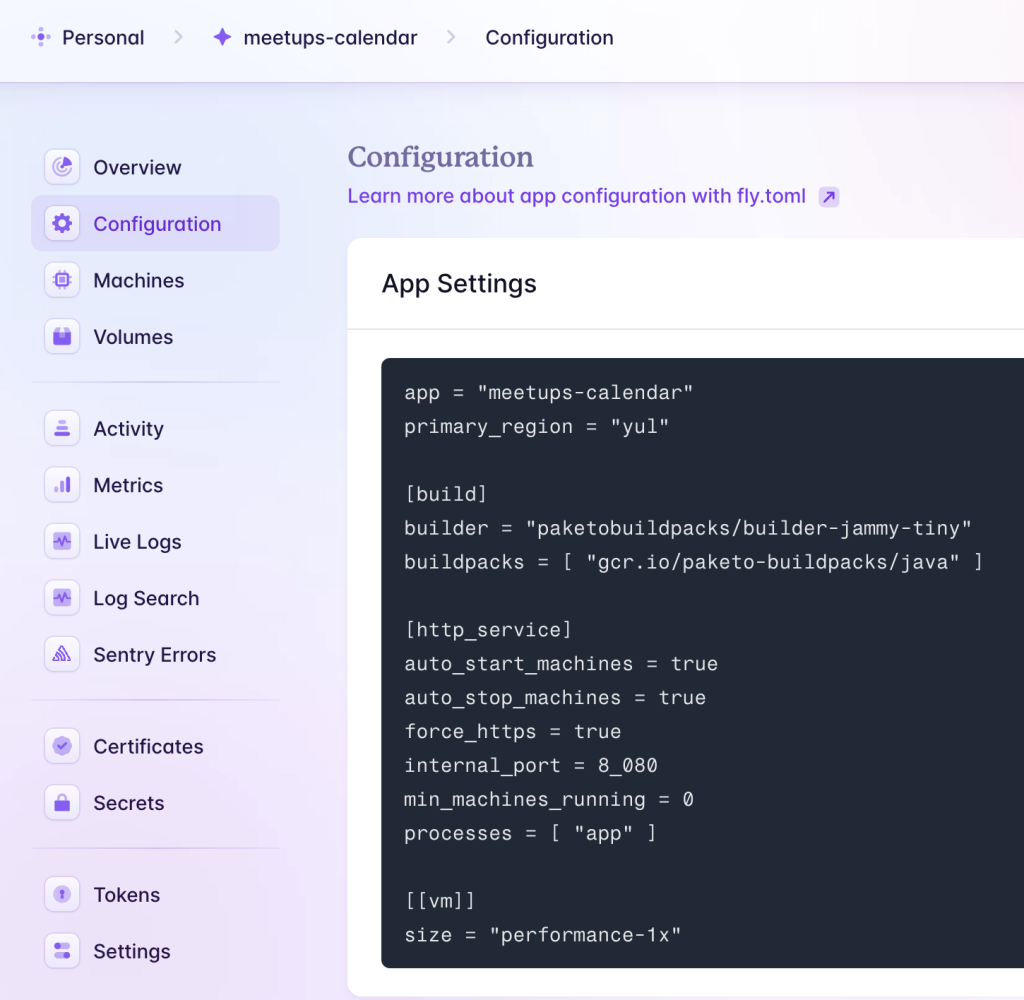

They have developed their offer supporting Dockerfiles as well as CNB Buildpacks (their documentation mentions how to use Paketo Buildpacks), and they run their workloads on Firecracker VMs, not containers.

They are frequently mentioned on HackerNews thanks to their continued innovation.

Render

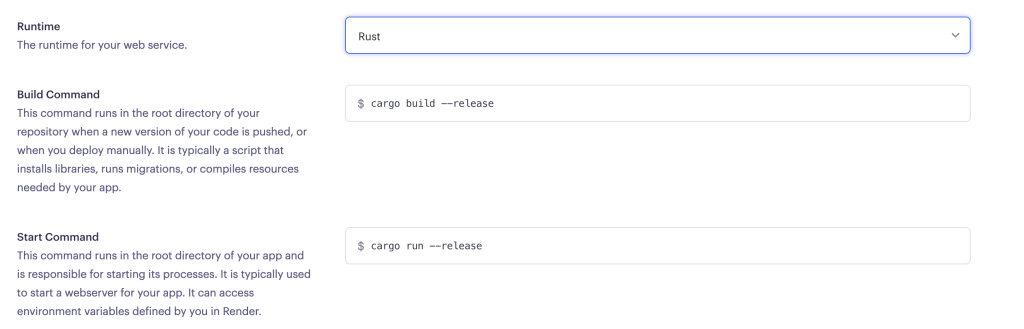

Dockerfiles only for Java 😢; the only natively supported backend languages are: Go, Node, Python3, Elixir, Rust and Ruby.

Those languages are supported by specifying a build command and a run command.

Porter

Porter is interesting because it allows you to « eject » to your own Cloud account; meaning that you can start using their infrastructure and then decide to still use their PaaS but on your own AWS/Azure/GCP account.

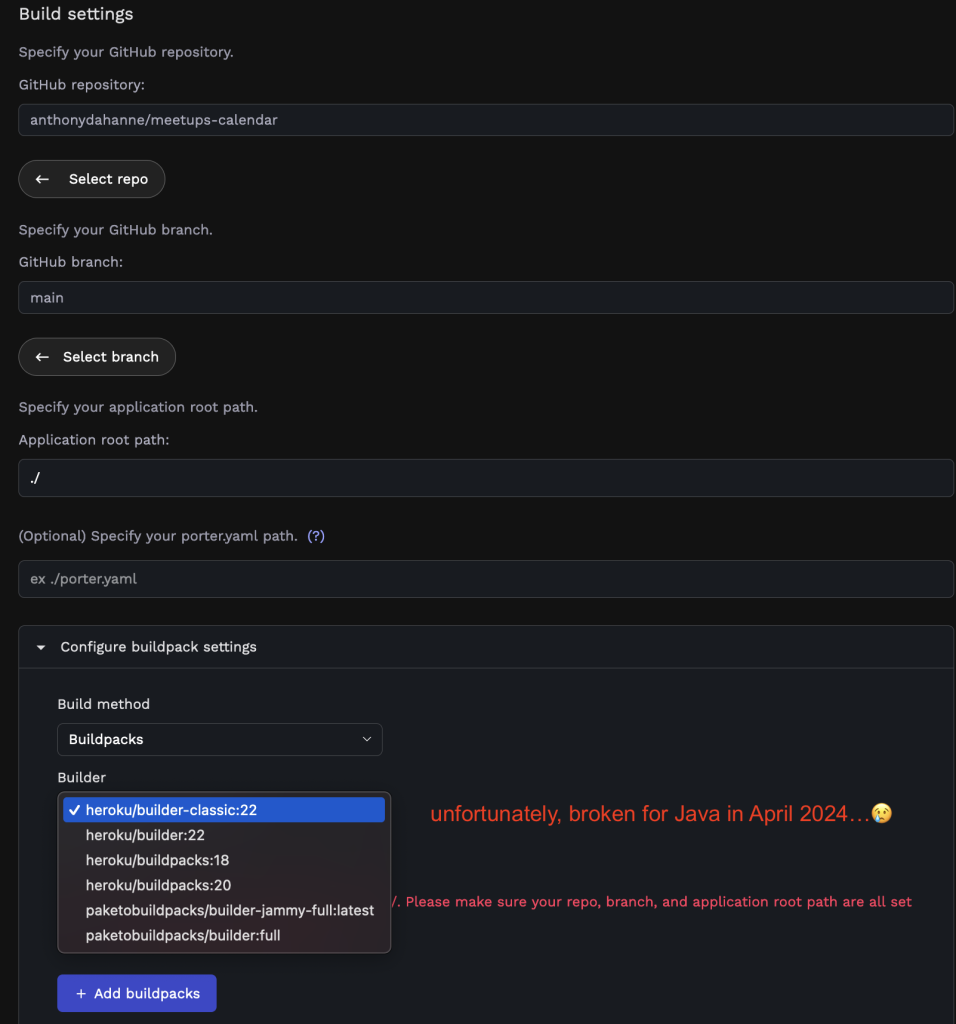

The other great news about Porter is that they support CNB V3 buildpacks, as well as a Dockerfile.

Unfortunately, as of April 2024, they could not detect a Java application on GitHub.

Railway

Railway uses Nixpacks, which they also maintain.

Nixpacks is an extension of Nix, to build Container Images, generating an isolated environment with the right dependencies (with Nix) and a Dockerfile that will be built to create the Container Image.

There is a video by Dan Vega on YouTube deploying a Spring Boot 3 app on Railway.

platform.sh

I haven’t tested them thoroughly yet; they are currently switching to Nixpacks as well.

Clever Cloud

I haven’t tested them thoroughly yet. They seem to ask the user for extra configuration to build their project.

For example, with Maven, they ask the user to specify a maven.json with a list of goals and profiles; the user can also customize the run command with the environment variable CC_RUN_COMMAND.

The Cloud providers

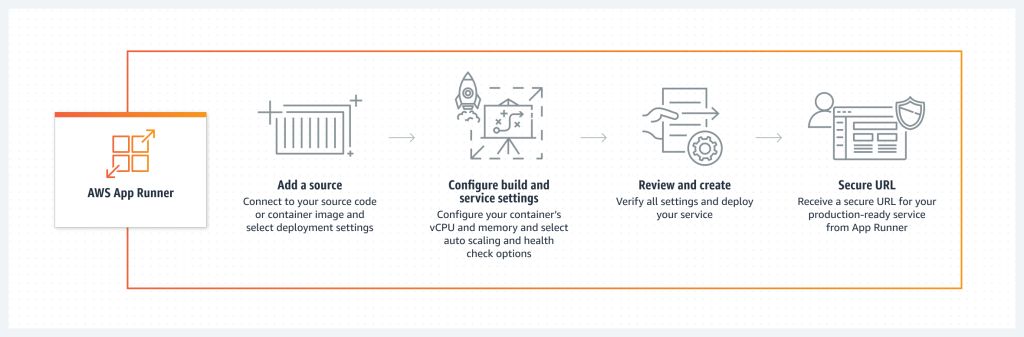

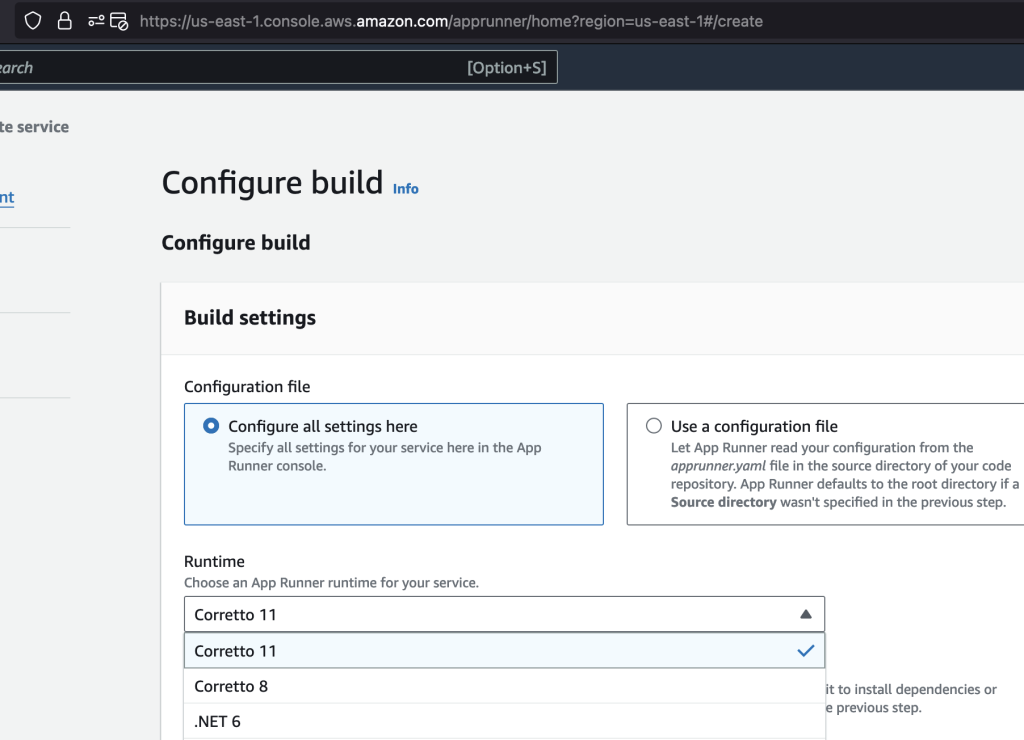

AWS: CodeCatalyst, App Runner or Elastic Beanstalk?

CodeCatalyst is a tool to simplify your development environment.

App Runner would be a perfect fit…

… if only it supported Java > 11!

How in 2024, 3 years after its first release, a cloud provider still does not support Java 17 is beyond me… Modern Java frameworks have moved past Java 11: Spring Boot 3 requires Java 17 as a minimum, and recent Quarkus releases do too…

Elastic Beanstalk is our final option; a good sign is that it supports Java 21 this time 🎉

But it’s much more complex to set up. This time, AWS does not hide the complexity away: the user has to set up roles and security groups, create an EC2 instance, and create a Postgres instance (granted, those steps include a default, but still, it’s overhead developers don’t want to know about). AWS won’t build the code for you either: you’ll need to upload a jar of your app and also figure out the variables to inject so that your app can connect to the Postgres instance.
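For that last step, here is a minimal sketch, assuming the Postgres instance was created as part of the Beanstalk environment: in that case Elastic Beanstalk exposes the connection details to the app as `RDS_HOSTNAME`, `RDS_PORT`, `RDS_DB_NAME`, `RDS_USERNAME` and `RDS_PASSWORD`. The class and method names are hypothetical, and a database created outside the environment would need variables you define yourself.

```java
import java.util.Map;

// Hypothetical helper: builds a JDBC URL from the RDS_* variables of a Beanstalk environment.
class BeanstalkJdbc {

    // Takes the environment as a Map so the logic can be tried without a real environment.
    static String jdbcUrl(Map<String, String> env) {
        return "jdbc:postgresql://" + env.get("RDS_HOSTNAME")
                + ":" + env.get("RDS_PORT")
                + "/" + env.get("RDS_DB_NAME");
    }

    public static void main(String[] args) {
        Map<String, String> env = System.getenv();
        if (!env.containsKey("RDS_HOSTNAME")) {
            // Assumption: no database is attached to this environment
            System.out.println("No RDS_* variables found");
            return;
        }
        System.out.println(jdbcUrl(env));
        // RDS_USERNAME and RDS_PASSWORD would be passed to the datasource the same way
    }
}
```

It is not much code, but it is exactly the kind of wiring that the other PaaS in this list do for you.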

Deprecated: Microsoft Azure: Azure Spring Apps (Enterprise)

Whoops! It has been in maintenance mode since the end of 2024; move over to Azure Container Apps, which is the official replacement.

There are 2 ways to efficiently deploy Spring Boot apps to Azure: either using the regular Azure Spring Apps (ASA) or using Azure Spring Apps Enterprise (ASA-E).

The official documentation is pretty much on point; if you’re lucky enough to use the enterprise version, you’ll replace --artifact-path with --source-path, since the enterprise version is coupled with VMware Tanzu Buildpacks:

```shell
az spring app deploy \
  --name $AZ_SPRING_APPS_APP_NAME \
  --service $AZ_SPRING_APPS_SERVICE_NAME \
  --resource-group $AZ_RESOURCE_GROUP \
  --source-path . \
  --build-env BP_JVM_VERSION=17
```

What about bindings though?

There are 2 excellent resources in their documentation that explain how to create bindings between your app and a SQL Database, without needing to copy/paste values into environment variables.

Microsoft Azure Container Apps

They’re the follow-up to Azure Spring Apps, and are still based on Paketo Buildpacks, as well as some in-house Azure Buildpacks; see this build log:

```
pass:
azure-buildpacks/java-buildpack-msopenjdk@0.3.3
paketo-buildpacks/syft@1.42.0
paketo-buildpacks/maven@6.15.12
paketo-buildpacks/executable-jar@6.8.3
paketo-buildpacks/apache-tomcat@7.14.2
azure-buildpacks/java-buildpack-telemetry@0.1.24
```

When I tried them out in February 2025, Gradle was not supported, so I only tested their own Java example based on Maven. I could connect to an Azure Postgres DB too, without too much trouble, though with quite some configuration; a promising solution I think!

Google Cloud Run

Google Cloud Run allows you to

- build your source code, using their own flavor of Cloud Native Buildpacks (V3),

- store the built artifact as an image in a container registry

- and run the container image

All you have to do is issue this one command:

```shell
gcloud run deploy meetups-calendar --source .
```

Now, you also need to instruct their buildpacks to use Java 17, using a project.toml file:

```toml
[[build.env]]
name = "GOOGLE_RUNTIME_VERSION"
value = "17"
```

The build is going to fail, because we did not set up a database; let’s do this:

```shell
gcloud sql instances create anthony-postgre --database-version=POSTGRES_14 --tier=db-g1-small
```

Finally, we need to redeploy our app with a link to the DB, using environment variables.

DigitalOcean App Platform

I haven’t tried DigitalOcean App Platform yet, but I know they use CNB Buildpacks (they document Heroku buildpacks, but nothing prevents you from using the Paketo buildpacks!).

The Self Hosted / running on your (cloud) infra PaaS

Kubero

https://github.com/kubero-dev/kubero

Coolify

CloudFoundry

See the chapter above about « Specialized PaaS ».

Epinio

Epinio is based on Paketo Buildpacks and runs on top of your Kubernetes cluster; I haven’t tried it out yet!

Tanzu Application Platform

TAP is the commercial PaaS by VMware: on top of a Kubernetes cluster, you’ll get a supported version of the Paketo Buildpacks, automatic builds with Kpack, auto redeploy with FluxCD, Istio service mesh, etc. all built and assembled for you to use.

You can test drive it for free using the Tanzu Academy Developer Sandbox.

Once you’re in there, you can follow the instructions provided; if you want to use a Postgres DB, follow those instructions for RabbitMQ and replace references to rabbitmq with postgres.

Now, what have we learnt so far?

When I started this investigation about PaaS in 2024, I divided the PaaS into Specialized PaaS / Cloud Providers / (cloud) Self Hosted; I actually found out that this market is evolving along these high-level trends:

PaaS running on your infra

CloudFoundry, Tanzu Application Platform, Epinio and Render don’t try to compete with the Cloud Providers: they leverage the providers’ offerings to focus on their main business, which is helping developers get to production with minimum friction.

xPacks

If you look closer at the way the « magic » is done to turn your code into a reachable service, two technologies stand out:

- Cloud Native Buildpacks: be they Heroku’s, Google’s, or the more versatile OSS Paketo Buildpacks, I was happy to see their usage is really strong across all types of PaaS.

- Nixpacks: originally created by Railway for their PaaS, they’re open source and based on Nix. It’s a good thing to have a competitor to Cloud Native Buildpacks.

That said, the best user experience for developers is not only building their apps (which I believe is a must now) but also automatically binding their apps to the services they use (Postgres, RabbitMQ, etc.), because the best configuration is the one you don’t need to provide.
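As an illustration of configuration you don’t need to provide, here is a minimal sketch of the app side of a binding. It assumes the platform follows the Service Binding Specification for Kubernetes, where each bound service shows up as a directory under `$SERVICE_BINDING_ROOT` containing one file per key (`type`, `host`, `username` and so on). The class and method names are made up, and not every PaaS in this list works this way.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical reader for file-based service bindings (one directory per bound service).
class BindingReader {

    // Reads every regular file of one binding directory into a key -> value map.
    static Map<String, String> readBinding(Path bindingDir) throws IOException {
        Map<String, String> entries = new TreeMap<>();
        try (DirectoryStream<Path> files = Files.newDirectoryStream(bindingDir)) {
            for (Path file : files) {
                if (Files.isRegularFile(file)) {
                    String value = new String(Files.readAllBytes(file), StandardCharsets.UTF_8).trim();
                    entries.put(file.getFileName().toString(), value);
                }
            }
        }
        return entries;
    }

    public static void main(String[] args) throws IOException {
        String root = System.getenv("SERVICE_BINDING_ROOT");
        if (root == null) {
            System.out.println("SERVICE_BINDING_ROOT is not set: no bindings to read");
            return;
        }
        try (DirectoryStream<Path> bindings = Files.newDirectoryStream(Paths.get(root))) {
            for (Path dir : bindings) {
                if (Files.isDirectory(dir)) {
                    Map<String, String> binding = readBinding(dir);
                    // Only print the type: credentials should never end up in logs
                    System.out.println(dir.getFileName() + " -> type=" + binding.get("type"));
                }
            }
        }
    }
}
```

In practice you rarely write this yourself: with Spring Boot, for instance, a library such as Spring Cloud Bindings can read those files and set the datasource properties for you.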